TL;DR

Most experiments have multiple metrics. How do you combine them into a single ship/no-ship decision?

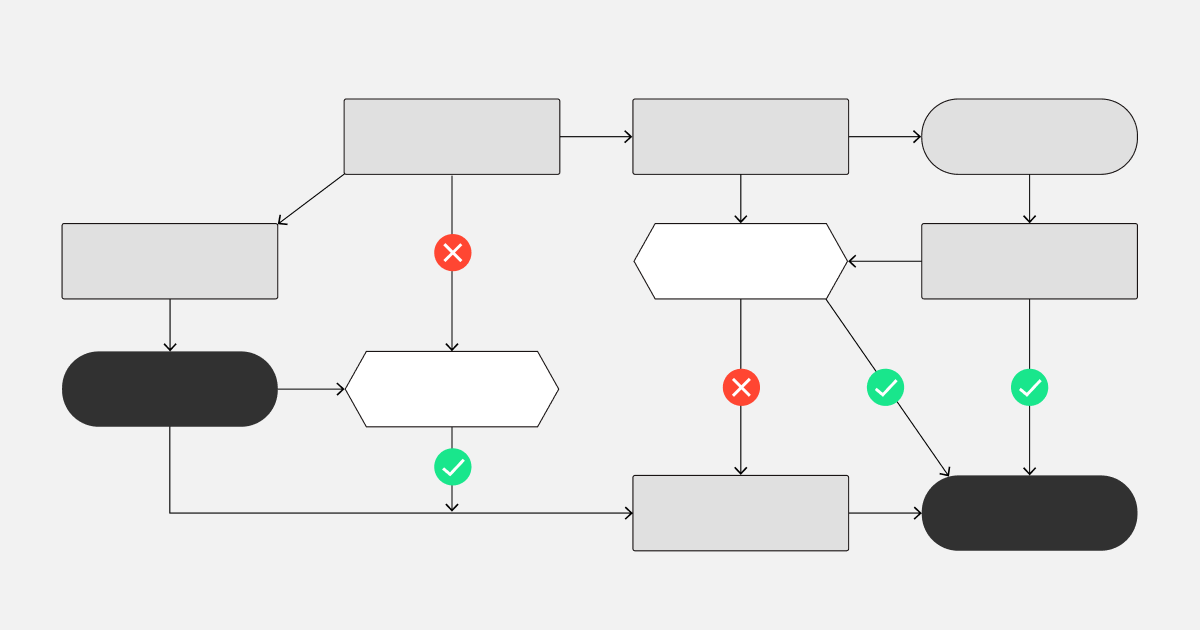

Metrics can be of different types: success metrics (superiority tests), guardrail metrics (non-inferiority tests), and others. The decision rule we use: ship if the treatment is significantly superior on at least one success metric, and significantly non-inferior on all guardrail metrics.
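The decision rule above can be sketched in a few lines of Python. This is a minimal illustration, not Spotify's implementation: the `MetricResult` type, its field names, and the `should_ship` function are hypothetical, and each metric's test outcome is assumed to be already computed elsewhere.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MetricResult:
    """Hypothetical container for one metric's test outcome."""
    name: str
    kind: str      # "success" (superiority test) or "guardrail" (non-inferiority test)
    passed: bool   # True if the test was significant in the desired direction


def should_ship(results: List[MetricResult]) -> bool:
    """Ship if at least one success metric wins and every guardrail passes."""
    success = [r.passed for r in results if r.kind == "success"]
    guardrails = [r.passed for r in results if r.kind == "guardrail"]
    # any() over success metrics: one significant win is enough.
    # all() over guardrails: a single failed guardrail blocks the ship.
    return any(success) and all(guardrails)


results = [
    MetricResult("minutes_played", "success", True),
    MetricResult("searches", "success", False),
    MetricResult("crash_rate", "guardrail", True),
    MetricResult("load_time", "guardrail", True),
]
print(should_ship(results))
```

Note the asymmetry: success metrics are combined with "any", guardrail metrics with "all". That asymmetry is what drives which error rate needs correcting for which group.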

Under this rule, you only need to adjust the false positive rate for the number of success metrics, because that's the only group where you have multiple chances to "win." You don't adjust it for guardrail metrics, since every one of them has to pass. However, to maintain the intended power, you must correct the false negative rate for the number of guardrail metrics, because each additional guardrail is another chance to miss. This post explains how Spotify's decision-making engine combines multiple metrics into product decisions.
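As a rough illustration of where the corrections land, here is a sketch that assumes a simple Bonferroni-style division. That choice is an assumption made for this example; the exact correction used in the full post may differ. The function name `adjusted_error_rates` and its parameters are hypothetical.

```python
def adjusted_error_rates(alpha: float, beta: float,
                         n_success: int, n_guardrail: int) -> dict:
    """Per-metric error rates under an assumed Bonferroni-style correction.

    alpha: intended overall false positive rate
    beta:  intended overall false negative rate (1 - power)
    """
    if n_success < 1:
        raise ValueError("the decision rule needs at least one success metric")
    return {
        # Multiple chances to "win": split alpha across success metrics.
        "alpha_success": alpha / n_success,
        # All guardrails must pass, so alpha is left uncorrected for them.
        "alpha_guardrail": alpha,
        # Multiple chances to miss: split beta across guardrail metrics
        # (left unchanged when there are no guardrails).
        "beta": beta / max(n_guardrail, 1),
    }


rates = adjusted_error_rates(alpha=0.05, beta=0.2, n_success=2, n_guardrail=4)
print(rates)
```

In practice the corrected false negative rate feeds into the sample size calculation, so adding guardrail metrics tends to increase the required sample size.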

Read the full post on Spotify Engineering: Risk-Aware Product Decisions in A/B Tests with Multiple Metrics