In an A/B test, each statistical test comes with a risk of finding a significant result even if the treatment has no effect. If you ship whenever you find a metric with a significant improvement, more metrics simply means more chances to find a significant result, even if the treatment has no effect on any metric.

Most experimenting companies know they should correct for multiple testing to avoid inflating the false positive rate. The simplest solution is the Bonferroni correction, but Bonferroni has a reputation problem. Many statisticians and data scientists will call it too conservative, outdated, or naive, and point you toward Holm, Hommel, or Benjamini-Hochberg (BH) instead. In our recent paper, we argue that this reputation is mostly undeserved.

The short version: Bonferroni gives you things the other methods don't, like consistent confidence intervals in all situations and the ability to incorporate the correction into the sample size calculation. And if you only correct for the metrics that can actually produce false positives in your shipping decision, the power cost of Bonferroni is smaller than you might expect.

The multiple testing problem

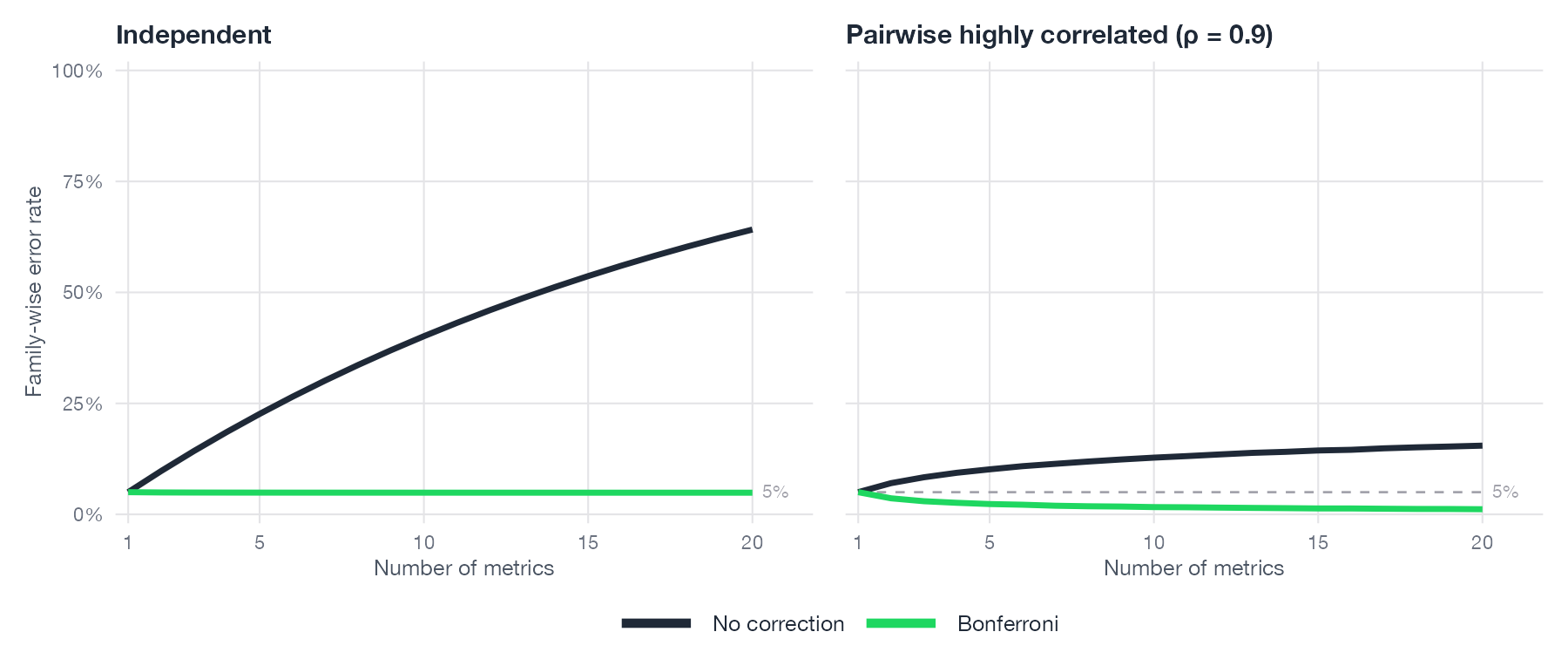

Without any multiple testing correction, the probability of at least one false positive metric result grows rapidly with the number of metrics. The figure shows the exact relationship, computed via multivariate normal integrals, for both independent metrics and highly correlated ones (pairwise ρ = 0.9). Bonferroni bounds the false positive rate at the nominal level in both cases. Under high correlation the uncorrected rate rises more slowly, which is also why Bonferroni becomes slightly conservative there. Either way, without any adjustment you are not running at 5%.
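As a rough illustration of the growth described above, the sketch below computes the uncorrected and Bonferroni-corrected rates in closed form for independent metrics, and estimates the correlated case by Monte Carlo rather than by the multivariate normal integrals used for the figure. Two-sided z-tests, α = 0.05, the one-factor correlation construction, and all function names are assumptions made for this example.

```python
import random
from statistics import NormalDist


def fwer_independent(m: int, alpha: float = 0.05) -> float:
    """P(at least one false positive) across m independent tests, each run at level alpha."""
    return 1.0 - (1.0 - alpha) ** m


def fwer_equicorrelated_mc(m: int, rho: float, alpha: float = 0.05,
                           reps: int = 20_000, seed: int = 0) -> float:
    """Monte Carlo estimate of the same probability for m two-sided z-tests
    whose test statistics all have pairwise correlation rho (assumed setup)."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    a, b = rho ** 0.5, (1.0 - rho) ** 0.5
    hits = 0
    for _ in range(reps):
        shared = rng.gauss(0.0, 1.0)
        # One-factor construction: Z_i = sqrt(rho) * W + sqrt(1 - rho) * E_i,
        # which gives unit variance and pairwise correlation rho under the null.
        if any(abs(a * shared + b * rng.gauss(0.0, 1.0)) > z_crit for _ in range(m)):
            hits += 1
    return hits / reps


for m in (1, 2, 6, 10):
    uncorrected = fwer_independent(m)
    corrected = fwer_independent(m, alpha=0.05 / m)  # Bonferroni: each test at alpha / m
    print(f"m={m:2d}  uncorrected={uncorrected:.3f}  bonferroni={corrected:.3f}")

print(f"m= 6, rho=0.9, uncorrected (Monte Carlo): {fwer_equicorrelated_mc(6, 0.9):.3f}")
```

The Monte Carlo estimate carries simulation noise, so it should be read as an approximation of the exact curve, not a replacement for it.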

The correction denominator is probably smaller than you think

Bonferroni's reputation for being too conservative almost always rests on the assumption that you are dividing the false positive rate, commonly referred to as α, by the total number of metrics in the experiment. The "correction family" is the set of metrics that are entered into the correction procedure, and it directly determines the denominator. In a previous post, we showed that guardrail metrics don't need correction: they are only used to veto a ship, not to decide whether to ship, so a false positive on a guardrail cannot cause you to ship something that doesn't work. Including them in the correction only inflates the denominator. That changes how we should think about Bonferroni.

In a typical A/B test, the majority of metrics are guardrails. At Spotify, the median experiment has 2 user-defined success metrics and 4 guardrail metrics. Apply Bonferroni across all six and you get α/6. Restrict the correction to success metrics only and you get α/2. That is a large difference. With a correctly specified family, the advantage of stepwise methods like Holm and Hommel also shrinks: their power gains come from ordering the p-values across the correction family and testing them against progressively less strict thresholds, and with only two metrics, there is little ordering to exploit.
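To make the difference between the two denominators concrete, here is a minimal sketch that converts α/6 and α/2 into per-metric thresholds and critical z-values. It assumes α = 0.05 and two-sided z-tests; the helper names are hypothetical.

```python
from statistics import NormalDist

ALPHA = 0.05  # assumed overall false positive rate


def bonferroni_threshold(alpha: float, family_size: int) -> float:
    """Per-metric significance threshold when alpha is split over the correction family."""
    return alpha / family_size


def critical_z(alpha_per_test: float) -> float:
    """Two-sided critical value: |z| must exceed this for significance."""
    return NormalDist().inv_cdf(1.0 - alpha_per_test / 2.0)


for label, size in [("all six metrics", 6), ("success metrics only", 2)]:
    threshold = bonferroni_threshold(ALPHA, size)
    print(f"{label:22s} threshold={threshold:.4f}  critical |z|={critical_z(threshold):.3f}")
```

The smaller family needs a visibly lower critical value, which is where the power difference between the two choices comes from.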

The power gap is smaller than you might expect

Correcting only success metrics, we applied all common correction methods to ~1,300 recent experiments from Spotify (1,840 treatment-control comparisons).

| Method | Ship rate | vs. Bonferroni |
|---|---|---|
| None | 32.3% | +9.2pp |
| BH | 28.0% | +4.9pp |
| Hommel | 27.7% | +4.6pp |
| Holm | 27.7% | +4.5pp |
| Bonferroni | 23.1% | ref. |

Note: The shipping rates here differ from those reported in our post on Spotify's experiments with learning. Three things account for the difference: rollouts are included there and have a higher success rate; the set of experiments is not the same; and the decision rule differs. Here we ask only whether success metrics improved and guardrails are non-inferior. That post applied the full decision rule used in Spotify's experimentation platform Confidence. See the post for details.

Going uncorrected adds 9.2 percentage points to the ship rate, likely driven, at least in part, by false positives. Holm and Hommel, which both control the family-wise error rate (FWER: the probability of at least one false positive among the metrics in an experiment's correction family), ship about 4.5 percentage points more than Bonferroni. BH controls a different quantity: the false discovery rate (FDR), the expected fraction of shipped features that don't actually work. Under FWER, among experiments where the treatment has no effect, at most 5% will produce a false positive metric result. Under FDR, among the experiments that led to a decision to ship, at most 5% are expected to be false positive decisions. We include BH for comparison since it is widely used, but for product decisions FWER is typically the more appropriate target. When we ran the same analysis with guardrails incorrectly included, the advantage of Holm and Hommel over Bonferroni collapsed to near zero: their stepwise advantage was entirely offset by the larger denominator.
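For readers who want to see how the procedures differ mechanically, the following is a minimal textbook sketch of Bonferroni, Holm, and BH applied to one correction family. It is not the implementation used for the analysis above, the p-values are made up, and Hommel is omitted because its procedure is considerably more involved.

```python
def bonferroni(pvals: list[float], alpha: float = 0.05) -> list[bool]:
    """Reject each hypothesis whose p-value is at most alpha / m."""
    m = len(pvals)
    return [p <= alpha / m for p in pvals]


def holm(pvals: list[float], alpha: float = 0.05) -> list[bool]:
    """Step-down: compare the k-th smallest p-value to alpha / (m - k + 1),
    stopping at the first failure."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    reject = [False] * m
    for rank, i in enumerate(order):  # rank = 0 for the smallest p-value
        if pvals[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break
    return reject


def benjamini_hochberg(pvals: list[float], alpha: float = 0.05) -> list[bool]:
    """Step-up: find the largest k with p_(k) <= k * alpha / m and reject
    the k smallest p-values. Controls FDR, not FWER."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    k_max = 0
    for rank, i in enumerate(order, start=1):
        if pvals[i] <= rank * alpha / m:
            k_max = rank
    reject = [False] * m
    for i in order[:k_max]:
        reject[i] = True
    return reject


# Hypothetical p-values for two success metrics.
for pvals in ([0.01, 0.04], [0.03, 0.04]):
    print(pvals, "Bonferroni:", bonferroni(pvals),
          "Holm:", holm(pvals), "BH:", benjamini_hochberg(pvals))
```

In the first example Holm rejects a second metric that Bonferroni does not; in the second, only BH rejects anything, which reflects the weaker error criterion it controls.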

Simulation puts this in perspective. The table below shows the power advantage of Hommel and BH over Bonferroni across two dimensions: how many metrics have a real effect, and how correlated they are (8 metrics, N = 1,000, δ = 0.10, 10,000 replications).

| Scenario | Hommel vs. Bonferroni | BH vs. Bonferroni |
|---|---|---|
| 1 of 8 metrics with a real effect, independent | +0.1pp | +0.8pp |
| 1 of 8 metrics with a real effect, ρ = 0.95 | +0.0pp | +0.0pp |
| All 8 metrics with a real effect, independent | +1.9pp | +4.7pp |
| All 8 metrics with a real effect, ρ = 0.95 | +10.2pp | +13.0pp |

When only one metric has a real effect (not unlikely in product experiments), the gap collapses to near zero regardless of correlation. It grows when more metrics move simultaneously, reaching 13 percentage points for BH when all eight metrics have a real effect and are highly correlated (ρ = 0.95), which is an unusual scenario in practice. See all simulation details and more results in the paper.

Bonferroni gives you things the other methods do not

Simultaneous confidence intervals. With Bonferroni, every metric has a valid confidence interval, and all of them hold simultaneously: the probability that all intervals cover their true effects is at least 1 − α. BH gives intervals only for metrics that were found significant, and those require distributional assumptions that don't generally hold. In practice, this means some metrics end up with no usable CI at all. Experiment results get referenced in planning and decisions long after the original readout. Bonferroni is the only method that guarantees a useful interval for every metric, every time.
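A minimal sketch of Bonferroni simultaneous intervals, assuming normally distributed effect estimates with known standard errors and two-sided intervals. The estimates, standard errors, and function name are hypothetical.

```python
from statistics import NormalDist


def bonferroni_simultaneous_cis(estimates: list[float], std_errors: list[float],
                                alpha: float = 0.05) -> list[tuple[float, float]]:
    """Two-sided intervals that jointly cover all true effects with
    probability at least 1 - alpha (normal approximation assumed)."""
    s = len(estimates)
    # Each interval is built at level 1 - alpha / s instead of 1 - alpha.
    z = NormalDist().inv_cdf(1.0 - alpha / (2.0 * s))
    return [(est - z * se, est + z * se) for est, se in zip(estimates, std_errors)]


# Hypothetical effect estimates and standard errors for two success metrics.
estimates = [0.80, 0.30]
std_errors = [0.30, 0.20]
for (lo, hi), est in zip(bonferroni_simultaneous_cis(estimates, std_errors), estimates):
    print(f"estimate={est:.2f}  simultaneous 95% CI=({lo:.3f}, {hi:.3f})")
```

Every metric gets an interval regardless of whether it was significant, which is the property the paragraph above relies on.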

Sample size calculations. Bonferroni's adjusted threshold is α/S. Plug it into any standard sample size formula and you're done. Holm and Hommel require simulation: their rejection decisions depend on the joint ordering of all test statistics, so power has no closed form. BH requires an estimate of the share of metrics with no real effect, which nobody has before running the experiment. In both cases you cannot plan sample size without a simulation. At Spotify, where experiment scheduling is a real constraint, that means you cannot use the power gain to run more experiments in parallel.
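As an illustration of plugging α/S into a standard formula, the sketch below uses the usual two-sample z-test approximation with equal group sizes and a known standard deviation. The formula choice, the 80% power target, and the example inputs are assumptions for this example, not values taken from the paper.

```python
import math
from statistics import NormalDist


def sample_size_per_group(delta: float, sigma: float, n_success_metrics: int,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Per-group sample size for a two-sided two-sample z-test, with the
    Bonferroni-adjusted level alpha / S (standard normal approximation)."""
    nd = NormalDist()
    z_alpha = nd.inv_cdf(1.0 - alpha / (2.0 * n_success_metrics))
    z_beta = nd.inv_cdf(power)
    n = 2.0 * (sigma ** 2) * (z_alpha + z_beta) ** 2 / (delta ** 2)
    return math.ceil(n)


# Hypothetical inputs: detect a 0.1 standard deviation effect with 80% power.
for s in (1, 2, 6):
    n = sample_size_per_group(delta=0.1, sigma=1.0, n_success_metrics=s)
    print(f"S={s}: n per group = {n}")
```

Because the adjusted threshold is a fixed number, the calculation stays closed-form and reproducible for any family size.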

Optimal sequential testing. Most platforms let you stop experiments early. The most powerful approach is group sequential testing. Bonferroni works with it directly: each metric runs its own sequential test against its own alpha budget, with no coordination across metrics required. This matters because metrics accumulate information at very different rates. A daily engagement metric may be near full information by day four; a two-week retention metric is still at zero. Methods that exploit correlation across metrics need all metrics to accumulate data at roughly the same rate. In practice they don't, so there is no shared information fraction to exploit.
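The idea of each metric spending its own alpha budget at its own pace can be sketched with an alpha spending function. The example below assumes a Lan-DeMets O'Brien-Fleming-type spending function, which the post does not specify, and it only shows cumulative alpha spent, not the stopping boundaries themselves (those require numerical integration). Metric names and information fractions are hypothetical.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def obf_alpha_spent(info_fraction: float, alpha: float) -> float:
    """Cumulative two-sided alpha spent at a given information fraction under
    the O'Brien-Fleming-type spending function 2 * (1 - Phi(z_{alpha/2} / sqrt(t)))."""
    if not 0.0 <= info_fraction <= 1.0:
        raise ValueError("info_fraction must be in [0, 1]")
    if info_fraction == 0.0:
        return 0.0  # no data yet, nothing spent
    z = _STD_NORMAL.inv_cdf(1.0 - alpha / 2.0)
    return 2.0 * (1.0 - _STD_NORMAL.cdf(z / info_fraction ** 0.5))


# Hypothetical state on day four: two success metrics, each with budget alpha / 2.
per_metric_alpha = 0.05 / 2
info_fractions = {"daily_engagement": 0.95, "two_week_retention": 0.0}

for metric, t in info_fractions.items():
    spent = obf_alpha_spent(t, per_metric_alpha)
    print(f"{metric:20s} info={t:.2f}  alpha spent={spent:.5f} of {per_metric_alpha:.5f}")
```

Each metric is evaluated against its own budget and its own information fraction, so no shared timeline across metrics is needed.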

Always-valid inference (testing methods that let you check results at any time without inflating your false positive rate) works with BH and Hommel but is substantially less powerful. In our comparisons the power difference was around 15–18 percentage points, well above the 4–5 percentage point gain observed empirically from switching away from Bonferroni. A team using Bonferroni with group sequential testing ends up with more power than one using Hommel with always-valid inference, even though Hommel is the nominally more powerful correction method.

Why Spotify chose Bonferroni

Wanting more power is natural: experiment traffic is a scarce resource. But in statistics, everything comes with trade-offs. When it comes to replacing Bonferroni with nominally more powerful correction methods, those trade-offs, in our opinion and experience, speak in Bonferroni's favor.

- Once only success metrics are corrected for, the power gap between Bonferroni and more sophisticated FWER methods is 4–5 percentage points. It shrinks to near zero when few metrics have a real effect.

- Bonferroni is the only broadly implementable method that produces unconditional simultaneous CIs for every metric and supports reproducible sample size calculations.

- Guardrail metrics don't need correction (see our 2024 post on risk-aware decisions). The denominator should be the number of success metrics only.

- Bonferroni pairs with optimal group sequential testing. Switching to a more powerful correction method typically means switching to a weaker sequential approach, which often costs more power than it gains.

The paper is available on arXiv.